An in-car voice assistant that helps create a safer driving experience through hands-free communication, accident prevention, and emergency assistance.

Investigate the next wave of voice assistant adoption. Imagine that Ford is launching a voice assistant to bring those capabilities to their customers.

Primary and Secondary Research, Concept, System Diagrams, Low and High Fidelity Prototype, Video.

Sketch, Illustrator, Keynote, InVision, Premiere Pro.

Gina Kim, Nathalia Kasman - 8 Weeks / Spring 2019

Driving while distracted accounts for a large share of accidents on the road. Whether the distraction is a phone, a passenger, or another accident, it puts both the driver and those around them at risk. This project investigates how in-car voice-controlled interfaces can help people stay safe while driving.

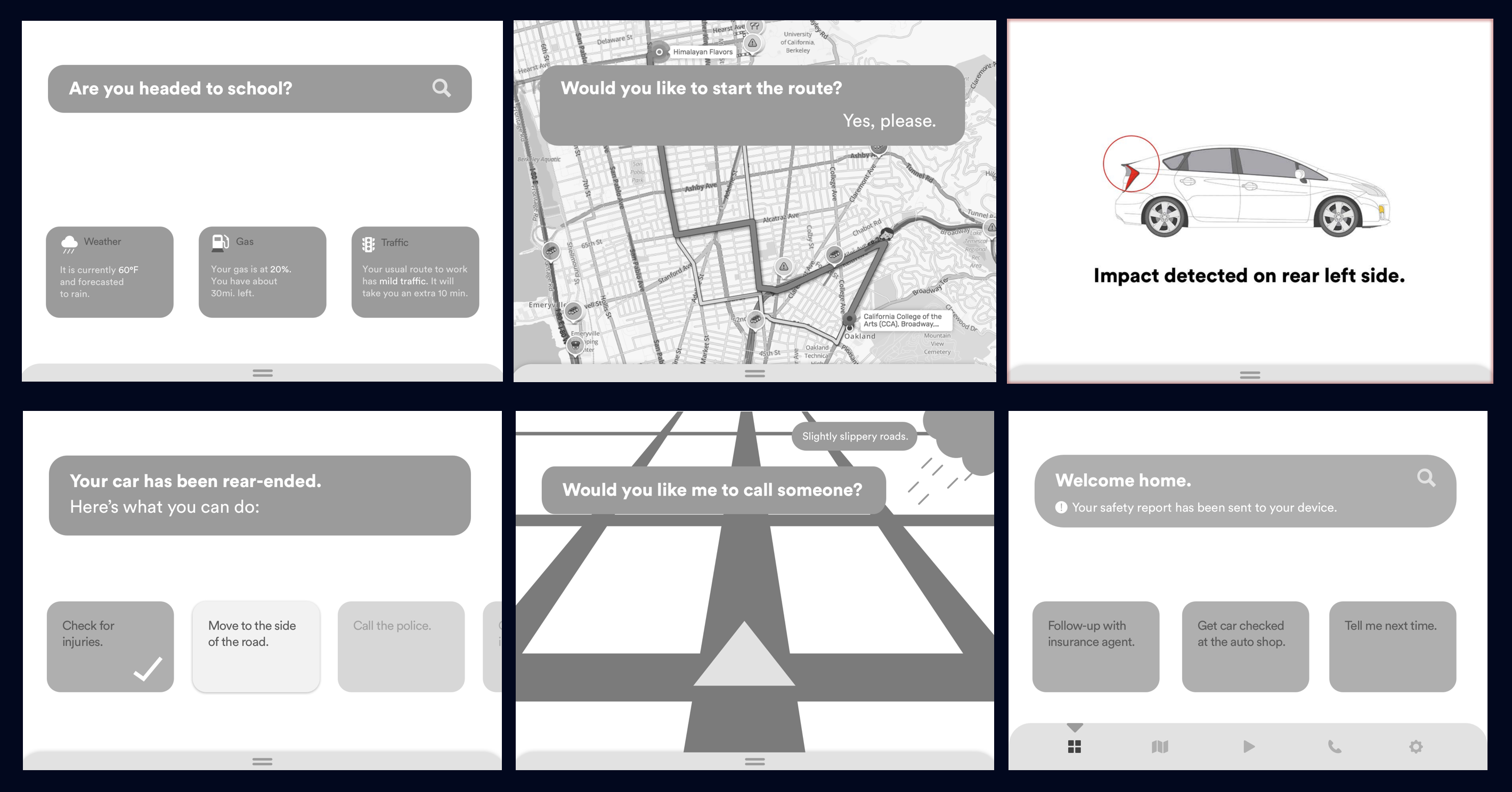

Smart Updates

When the system is switched on, drivers are alerted to the weather, traffic, and car conditions. These updates help drivers know what to expect before their drive.

Route Selection

Drivers can verbally input their destination and select a route based on their situation.

Safety Mode

When this mode is switched on, drivers are reminded of traffic rules and regulations. At the end of each trip, they receive a summary of their drive that reflects their positive actions and areas for improvement.

Accident Care

When things get out of hand, the voice assistant helps drivers through accidents and emergencies using on-screen and verbal directions.

Understanding habits around driving.

Designing for hands-free driving required us to first understand the existing driving experience. To learn what areas a voice assistant could be beneficial in, we interviewed 8 different drivers, experienced and inexperienced, about what they enjoyed and disliked about the driving experience. In addition, we asked questions around phone usage, as it facilitated voice assistant use in the car, but was also a huge source of hands-on distraction.

After reviewing our findings, we grouped statements that stood out by common themes. We discovered that the sense of control and autonomy that driving brought was a major highlight, while routine acts (e.g. getting gas) and emotions such as anxiety and road rage were pain points. Phone usage varied among interviewees, but recurring uses were entertainment and acquiring information.

Here were our four main insights:

Having control over your transportation and its environment makes the ride more enjoyable.

Driving can become mundane due to necessary but repetitive actions, so drivers resort to external stimulants for entertainment.

Phones have become part of the driving experience due to people’s growing reliance on their functions.

Drivers would like cars to understand their environment and adjust or alert drivers accordingly.

Leveraging driving habits to increase safety on the road.

We explored two concepts around integrating the phone and voice into the car. Our first concept targeted longtime drivers who had daily commutes, while the second was aimed at new drivers who were still in the process of learning.

The feedback we received on our concepts was that the two sets of features could benefit both user groups. Thus, we combined our concepts under the umbrella of safety. We hypothesized that providing the driver with information about their environment, whether weather updates or the traffic laws in a particular zone, would increase their sense of control and security.

However, we had to keep in mind that while the features would help both groups of users, their core needs still differed. We mapped out what the experience would look like for new drivers in contrast to the commuter.

For the new driver, driving is still somewhat unfamiliar territory and can cause some nerves. The voice assistant can help alleviate these worries by providing updates on road conditions and traffic rules.

The commuter is more confident in their ability on the road, but can still run into unpredictable situations. The voice assistant helps prevent accidents by suggesting safer routes, and provides assistance when situations do go awry.

Validating our concepts with real drivers.

We went through multiple rounds of usability tests, each increasing in fidelity. Participants were walked through a scenario that involved driving to a destination and getting into an accident along the way. We acted as the voice assistant, the narrator, and the screens.

Round 1 - Paper prototype and props found around the room were used to simulate the driving experience.

Round 2 - Digital wireframes displayed on a TV. We briefly explored having the screen on the windshield, but decided not to move forward with the idea due to the visual confusion it created and the lack of flexibility the space provided.


Round 3 - Asked participants to drive safely around a residential area and interact with our prototype verbally. Interactions were mimicked in real time on a tablet according to the situation and user input.

Users wanted different routes to be defined more clearly.

Our initial prototype had the routes labeled just as "safest," "fastest" and "recommended," which confused a lot of users. In our final prototype, we added some metadata under each label for clarity. For example, the safest route could be roads that were the least busy on a rainy day.

Users wanted the voice assistant to solve problems for them, instead of just telling them about the situation.

When incidents happened, the voice assistant would alert the user to the situation. However, users wished that the assistant would suggest how the situation could be solved, rather than simply state what was going on. Based on this feedback, we explored how the assistant could play an active role during accidents to aid the driver (e.g. calling the car insurance company).

Some users found the Safety Report to be condescending. They wanted facts instead of suggestions, as proper driving is too subjective.

Based on the user's drive, the Safety Report outlined potential areas for improvement to increase driving safety. Users found the report to be condescending, and noted that the definition of good driving is very situational (one might slam on the brakes to prevent a collision). We worked to provide an objective report of their trip and removed the subjective suggestions.

Users wanted minimal or no touch interaction with the interface if possible. However, they still wanted the ability to manually switch the voice assistant on or off.

We observed that users were distracted by reading or trying to interact with the screens while driving. We remedied this by making the main point of touch interaction, the menu, slide out of view when the car was in motion. We also maximized the amount of information presented on the screen at one time, reducing the need for the user to prompt one chunk to appear after another. Lastly, users wanted the ability to manually turn the assistant on or off. To them, it provided a sense of security when they wanted the system completely off.

If we had more time.

...make driving a less monotonous activity?

...consider drivers’ emotional states?

...further integrate the physical and digital features of a phone?

These are areas we want to explore further, but were unable to due to time constraints. In the next evolution of the driving assistant, we want to expand preventative safety measures to encompass the driver's emotional states and boredom, understanding when certain actions can be red flags. We also want to leverage connecting the phone to the assistant, both physically and digitally, to transform the use of the phone in the car.